Around this time last year, I posted about setting up a udev rule to run a script when I plugged my USB drive containing all of my music into one of my laptops; the script, a couple of lines of bash, removes all pre-existing symlinks to $HOME/Music and repopulates the directory with an updated set. Almost. The one flaw that has been an irritant of variable intensity, depending on what I felt like listening to at any given time, is that the symlinks aren’t written for directories that already exist on the target filesystem.

In order that I am able to play some music if I forget the USB drive, each of the laptops has a subset of albums on it, depending on the size of their respective hard drives. If I add a new album to the USB drive for an artist whose directory already exists on a laptop, then that change won’t get written to either of the laptops when the drive is plugged in. Not entirely satisfactory. I had tinkered around with globbing, or with having find(1) scan deeper into the tree, or even a loop to check for the presence of directories in an array…

It just got too hard. My rudimentary scripting skills and the spectre of recursion, I am sorry to admit, conspired to undermine my resolve. So, rather than concede unconditional surrender, I asked for help. As is almost always the case in these situations, this proved to be a particularly wise move; the response I received was neither what I expected, nor was it anything I was even remotely familiar with: so in addition to an excellent solution (one far better suited to what I was trying to achieve), I learned something new.

The first comment on my question proved singularly insightful.

Care to use union mounts, for example via overlayfs?

A union mount, something I was until now blissfully unaware of, is, according to Wikipedia,

a mount that allows several filesystems to be mounted at one time, appearing to be one filesystem.

Union mounting has a long and storied history on Unix, beginning in 1993 with the Inheriting File System (IFS). The genealogy of these mounts has been well covered in this 2010 LWN article by Valerie Aurora. However, it is only in the current kernel, 3.18, that a union mount has been accepted into the kernel tree.

After reading the documentation for overlayfs, it seemed this was exactly what I was looking for. Essentially, an overlay mount would allow me to “merge” the underlying tree (the Music directory on the USB drive) with an “upper” one, $HOME/Music on the laptop, completely seamlessly.

Then whenever a lookup is requested in such a merged directory, the lookup is performed in each actual directory and the combined result is cached in the dentry belonging to the overlay filesystem.
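To make that lookup order concrete, here is a rough userspace illustration in bash. It is only a sketch of the precedence rule for a single name, not how the kernel implements it: the `resolve` function and its arguments are hypothetical, it ignores whiteouts, and it does not show that directories present in both layers are merged rather than shadowed.

```bash
#!/usr/bin/env bash
# Userspace illustration only: overlayfs does this inside the kernel.
# resolve UPPER LOWER NAME -> prints the path a merged view would expose.
resolve() {
    local upper=$1 lower=$2 name=$3
    if [[ -e $upper/$name ]]; then
        printf '%s\n' "$upper/$name"    # the upper layer takes precedence
    elif [[ -e $lower/$name ]]; then
        printf '%s\n' "$lower/$name"    # otherwise fall through to the lower layer
    else
        return 1                        # the name exists in neither layer
    fi
}
```

For example, `resolve "$HOME/Music" /path/to/usb/Music some-file` would print the local copy if there is one and the copy on the drive otherwise.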

It was then just a matter of adapting my script to use overlayfs, which was trivial:

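A minimal sketch of what such a script might look like follows; it is not the original script. The paths, the environment variable names and the function names are all assumptions for illustration. The `lowerdir`, `upperdir` and `workdir` options are the ones described in the overlayfs documentation, which also requires `workdir` to be an empty directory on the same filesystem as `upperdir`.

```bash
#!/usr/bin/env bash
# Hypothetical paths: adjust to your own drive and user.
lower="${MUSIC_LOWER:-/run/media/user/usb/Music}"  # tree on the USB drive
upper="${MUSIC_UPPER:-/home/user/Music}"           # local albums; receives any writes
work="${MUSIC_WORK:-/home/user/.music-work}"       # scratch dir, same filesystem as upper

# Build the option string that mount(8) hands to overlayfs.
overlay_opts() {
    printf 'lowerdir=%s,upperdir=%s,workdir=%s' "$1" "$2" "$3"
}

# Mount the merged view on top of the local music directory (needs root).
mount_music() {
    mkdir -p "$work"
    mount -t overlay overlay -o "$(overlay_opts "$lower" "$upper" "$work")" "$upper"
}
```

Calling `mount_music` from the script that the udev rule triggers, and `umount` on the same directory when the drive is removed, would give the merged view for as long as the drive is plugged in.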

And now, when I plug in the USB drive, the contents of the drive are merged with my local music directory, and I can access whichever album I feel inclined to listen to. I can also copy files across to the local machines, knowing that if I update the portable drive, I will no longer have to forgo listening to any newer additions by that artist (without manually intervening, anyway).

Overall, this is a lightweight union mount. There is neither a lot of functionality, nor complexity. As the commit note makes clear, this “simplifies the implementation and allows native performance in these cases.” Just note the warning about attempting to write to a mounted underlying filesystem, where the behaviour is described as “undefined”.

Notes

Creative Commons image, multilayered jello by Frank Farm.

I started using Tarsnap about three years ago and I have been nothing but impressed with it since. It is simple to use, extremely cost effective and, more than once, it has saved me from myself, making it easy to retrieve copies of files that I have inadvertently overwritten or otherwise done stupid things with. When I first posted about it, I included a simple wrapper script, which has held up pretty well over that time.

One of the easiest ways to contribute to Arch is to maintain a package, or packages, in the AUR, the repository of user-contributed PKGBUILDs that extends the range of packages available for Arch by some magnitude. Given that PKGBUILDs are just shell scripts, the barrier to entry is relatively low, and investing the small amount of effort required to clear that barrier will not only give you a much better understanding of how packaging works in Arch, but will scratch your own itch for a particular package and hopefully assuage someone else’s similar desire at the same time.
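To show how little is involved, here is a bare-bones PKGBUILD sketch. The package name, URL and source file are made up for illustration, and `'SKIP'` stands in for a real checksum; a package intended for the AUR would need real values and should follow the packaging guidelines.

```bash
# PKGBUILD: hypothetical package, for illustration only
pkgname=hello-example
pkgver=1.0
pkgrel=1
pkgdesc="Prints a greeting (placeholder package)"
arch=('any')
url="https://example.org/hello-example"
license=('MIT')
source=("hello.sh")        # a local script sitting next to the PKGBUILD
sha256sums=('SKIP')        # placeholder; use a real checksum in practice

package() {
    # makepkg sets $srcdir and $pkgdir before calling this function
    install -Dm755 "$srcdir/hello.sh" "$pkgdir/usr/bin/hello-example"
}
```

Running `makepkg` in the directory containing this file and `hello.sh` would build the package; the variables are plain shell assignments and `package()` is an ordinary shell function.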

Due to a rather embarrassing episode in #archlinux a couple of weeks ago, where I naively shared one of the first bash scripts I had written without first looking back over it, and had to subsequently endure what felt like the ritual code mocking, but was in fact some helpful pointers as to how I could make the script suck less (a lot less), I have been going through those older scripts and applying the little knowledge that I have picked up in the interim, reappraising the usefulness of the scripts as I go.

One of the real strengths of Arch is its ability to be customised. Not just in terms of the packages that you choose to install, but how those packages themselves can be patched, altered or otherwise configured to suit your workflow and setup. I have posted previously about, for example, building Vim or hacking PKGBUILDs. What makes all this possible is the wonderful ABS, the Arch Build System.

After posting last week about KeePassC as a password manager, a couple of people immediately commented about a utility billed as “the standard Unix password manager.” This is definitely one of the reasons I continue to write up my experiences with free and open source software: as soon as you think that you have learned something, someone will either offer a correction or encourage you to explore something else that is similar, related or interesting for some other tangential reason.

Managing passwords is a necessary evil. You can choose a number of different strategies for keeping track of all of your login credentials; from using the same password for every site which prioritises convenience over

It is now almost exactly two years since the AIF was put out to pasture. At the time, it caused a degree of consternation, inexplicably causing some to believe that it presaged the demise, if not of Arch itself, then certainly of the community around it. And I think it would be fair to say that it was the signal event that launched a number of spin-offs, the first of which from memory was Archbang; soon followed by a number of others that promised “Arch Linux with an easy installation,” or something to that effect…

After relieving my Pi of seedbox duties, I was looking around for some other use for it. I decided, after looking over the Arch wiki article on OpenVPN, that the Pi would be a terrific VPN server; when I am out and about I can access a secure connection to my home network, thereby significantly reducing the risk of my privacy being compromised while using connectivity to the Internet provided by the notoriously security-conscious sysadmins that run networks in hotels and other public places.

Vim is not just an editor (and not in the way that Emacs is more than just an editor); it is for all intents and purposes a universal design pattern. The concept of using Vim’s modes and keybinds extends from the shell through to file managers and browsers. If you so choose (and I do), then a significant number of your interactions with your operating system are mediated by Vim’s design principles. This is undeniably a good thing™ as it goes some way to standardising your command interface (whether at the command line or in a GUI).

Using a SOCKS proxy over an SSH tunnel is a well-documented and simple, if much less flexible, stand-in for a full-blown VPN. It can provide a degree of comfort when accessing private or sensitive information over a public Internet connection, or you might use it to get around the terminally Canutian construct that is known as geo-blocking, that asinine practice of pretending that the Internet observes political boundaries…
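As a rough sketch of the basic idea, the helper below prints an `ssh` command that opens a local SOCKS proxy. The function name, the default host and the default port are placeholders; `-D`, `-N` and `-C` are standard OpenSSH options for dynamic forwarding, running no remote command, and compression.

```bash
#!/usr/bin/env bash
# Hypothetical defaults; substitute your own server and port.
socks_cmd() {
    local host=${1:-user@example.org} port=${2:-1080}
    # -D: dynamic (SOCKS) forwarding on localhost:port
    # -N: do not run a remote command; -C: compress traffic
    printf 'ssh -D %s -N -C %s\n' "$port" "$host"
}
```

Running the printed command and then pointing a browser at a SOCKS5 proxy on `localhost` and the chosen port sends that browser’s traffic through the remote host.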

Just over a year ago, I was invited to participate in an exciting alpha for BitTorrent Sync. I wrote up my experience and, with one reservation, found it to be a fantastic tool that did pretty much everything I needed, including letting me finally get rid of Dropbox. That one reservation, although not entirely clear at the time, was clarified soon after and was crushingly disappointing: the programme was closed source.

Late last month there was a post on Meta Stack Overflow wondering why SO is “so negative of late.” Reading through the extensive list of answers, and all the comments that quickly adhered to them like barnacles on a becalmed schooner, it was hard not to extrapolate to the experiences of online communities elsewhere, especially in the free and open software world. Stack Overflow is going on six years old now and has grown to be a truly formidable resource for programmers, dilettantes (the category to which I clearly belong) and, apparently, people wanting their homework done for them. This isn’t the first time this question has been raised there, and I am sure it won’t be the last.

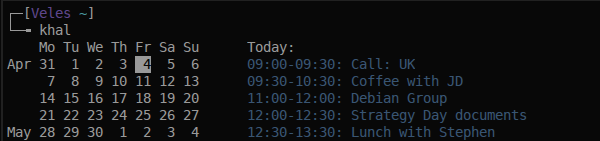

Following up on my post from last week, about using khal and mutt, where I covered off a couple of simple hacks to integrate command line calendaring with Mutt, I had been using the main development branch of khal which includes syncing capability. However, as I was conversing with the developer, Christian, about a bug report, he indicated that this functionality would be superseded by development in a separate branch that uses vdirsyncer to synchronise calendars.

I have posted a few times now about how I use Mutt, that most superlative of email clients. Using a variety of different tools, I have settled on an effective and satisfying workflow for managing both my personal and professional email, with one glaring exception: calendaring. This, I should stress, is not for want of trying. It is not necessarily a nagging concern in terms of my personal use of email, but professionally it is a daily frustration.

One of the most satisfying aspects of running free and open source software is the ability to continually tinker with your setup, limited only by your imagination and ability. The more you do tinker, the smaller the gap between the former and the latter, as each small project inevitably leads you into a deeper understanding of various aspects of your system and how you can customize that system to suit your exact requirements.

The relentless commercialization of traditional holidays, not just the December variety but all of them now, means that what was ostensibly an occasion for celebrating your particular flavour of $deity, pagan ritual or just an opportunity to reconnect with your wider family, has been co-opted to the worship of the most pernicious of all cults, consumerism.

I have written previously about BitTorrent Sync, the encrypted file syncing application that uses the BitTorrent protocol to sync your data over your LAN, or over the Internet, using P2P technology. I have been using it since early this year as a replacement for Dropbox.

For those people that prefer to forgo the full-blown DE and, instead of all the “convenience” that this sort of setup offers, piece together their setup from a variety of different tools and knit it all together with some scripting, automounting external drives is, notwithstanding the inexplicable number of posts to the forums to the contrary, incredibly straightforward with udisks. My approach in this respect is to use udiskie; it is lightweight, unobtrusive, configurable and foolproof, as far as I can tell.
